Many business leaders assume open source AI lacks the power and reliability of proprietary solutions like ChatGPT or Claude. In reality, open source AI has rapidly evolved into a cost-effective, customizable powerhouse that rivals proprietary models on most benchmarks. This guide clarifies what open source AI truly means, how it compares to proprietary alternatives, and how you can deploy it to drive business automation with greater control, lower costs, and tailored workflows. You'll discover definitions, deployment methods, performance data, practical challenges, and actionable strategies to leverage open source AI for operational efficiency.

Table of Contents

- Key takeaways

- Understanding open source AI: definition and core components

- Performance and cost benefits: open source AI versus proprietary AI

- Deploying open source AI for business automation: methods and tools

- Challenges and expert nuances in using open source AI agents

- Harness open source AI with AgentsBooks

- Frequently asked questions

Key Takeaways

| Point | Details |

|---|---|

| Open source freedoms | OSI approved licenses grant four essential freedoms including use, study, share, and modify with access to source code, model weights, and training data documentation. |

| Self hosted deployment | Open source AI can run on your own hardware or cloud and integrate with automation stacks for business workflows. |

| Performance parity | Open source models are closing the gap with proprietary models on benchmarks and can match or exceed them in key tasks. |

| Hybrid approach benefits | Using both open source and proprietary AI helps balance cost, customization, and capability gaps. |

| Safety monitoring required | Ongoing monitoring and instrumentation are vital to address limitations and instability in AI agents. |

Understanding open source AI: definition and core components

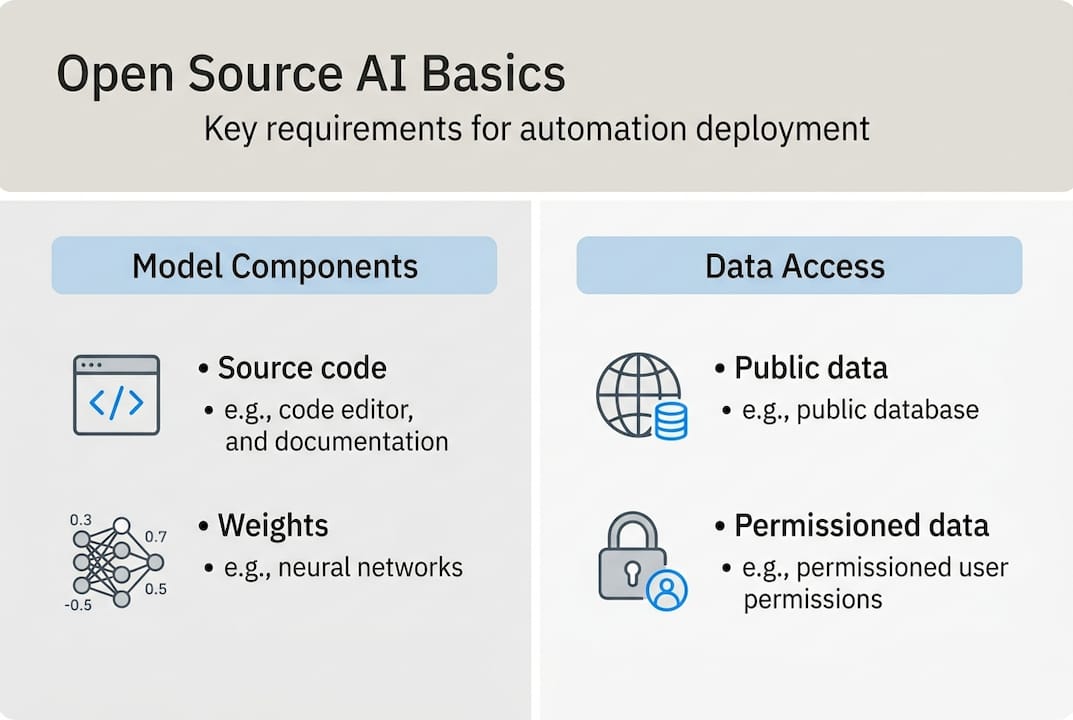

Open source AI is not just about free access to code. According to the Open Source AI Definition (OSAID 1.0), open source AI systems must be distributed under OSI-approved licenses that grant four essential freedoms: use for any purpose, study how the system works, share copies, and modify the system. These freedoms require access to the preferred form for making modifications, including source code, model weights, training data documentation, and sufficient technical details to reproduce or adapt the system.

The mechanics of open source AI differ from traditional software. You need four key components: complete source code for data processing and model training, legally shareable training data in one of four classes (public domain, permissive, reciprocal, or copyleft), model parameters and weights in accessible formats, and comprehensive documentation covering architecture, training procedures, and hyperparameters. This transparency enables businesses to audit models for bias, customize behavior for specific workflows, and verify security without relying on vendor black boxes.

Legally shareable training data falls into distinct categories. Public domain data has no copyright restrictions. Permissive licenses like MIT or Apache allow broad use with minimal obligations. Reciprocal licenses require sharing modifications under the same terms. Copyleft licenses like GPL mandate that derivative works remain open source. Understanding these distinctions helps you assess compliance risks and integration strategies when selecting open source AI models.

Transparency is the game changer. Unlike proprietary APIs where you send data to external servers and trust vendor claims, open source AI lets you inspect every layer of the model. You can verify training data sources, audit decision logic, and modify architectures to align with your industry requirements. This level of control is critical for regulated industries like finance and healthcare where explainability and data sovereignty are non-negotiable.

Pro Tip: Not all AI systems labeled "open" meet OSAID requirements fully. Before deploying, verify that the model provides access to training data documentation, weights, and source code under an OSI-approved license. Some vendors release only inference code or partial weights, limiting your ability to customize and audit the system.

Performance and cost benefits: open source AI versus proprietary AI

Open source AI has closed the performance gap with proprietary models faster than most analysts predicted. By 2026, leading open source large language models like Llama 3.1, Qwen 2.5, and Mistral Large match or exceed proprietary models on key benchmarks. The 41 open-source large language models benchmark testing report shows gaps of just 0.3 to 9 points on MMLU (Massive Multitask Language Understanding) tests, with open source models often outperforming on code generation and math reasoning tasks.

| Model | Type | MMLU Score | HumanEval (Code) | GSM8K (Math) |

|---|---|---|---|---|

| GPT-4 | Proprietary | 86.4 | 67.0 | 92.0 |

| Claude 3.5 | Proprietary | 88.7 | 73.0 | 95.0 |

| Llama 3.1 405B | Open Source | 85.2 | 61.0 | 89.0 |

| Qwen 2.5 72B | Open Source | 84.9 | 64.0 | 90.5 |

| Mistral Large 2 | Open Source | 84.0 | 59.0 | 88.0 |

Cost advantages are even more dramatic. Open source AI delivers 86-92% savings compared to proprietary API pricing when you run models locally or on your own cloud infrastructure. Proprietary models charge per token, which adds up quickly for high-volume automation tasks like customer support, content generation, or data analysis. Open source models require upfront investment in hardware or cloud compute, but total cost of ownership drops significantly at scale.

Local inference also eliminates data transfer costs and latency. When you host open source models on your servers, sensitive business data never leaves your infrastructure. This matters for compliance with GDPR, HIPAA, and other regulations that restrict data sharing with third parties. You gain privacy, control, and predictable costs without sacrificing performance on most business automation tasks.

Experts predict performance parity by mid-2026 for general language understanding and generation. Proprietary models may still lead in novel reasoning, extensive multilingual support, and cutting-edge research capabilities. However, for the majority of business automation workflows like email drafting, report summarization, and customer inquiry routing, open source AI is already sufficient. You can explore LLM performance comparisons to identify the best model for your specific use case.

"Open source AI has democratized access to powerful language models, enabling businesses to deploy customized automation agents at a fraction of proprietary costs. The performance gap is closing rapidly, and hybrid strategies that combine open source for routine tasks with proprietary models for complex reasoning deliver the best ROI." — Industry AI Analyst

Pro Tip: Use open source AI for cost-sensitive, high-volume automation workflows like content generation, data extraction, and routine customer interactions. Reserve proprietary AI for tasks requiring advanced reasoning, real-time multilingual support, or access to the latest model capabilities. This hybrid approach optimizes both budget and performance.

Deploying open source AI for business automation: methods and tools

Deploying open source AI for business automation requires choosing the right architecture for your needs. Three popular approaches offer different trade-offs between simplicity, scalability, and control: self-hosted workflow stacks, persistent autonomous agents, and sovereign AI agent factories. Each method supports continuous operation, integration with business pipelines like CRM and content systems, and orchestration of multi-step processes.

Self-hosted workflow stacks combine low-code automation platforms with local AI inference engines. Tools like n8n for workflow orchestration, Ollama for running open source LLMs locally, and Flowise for building conversational agents create a powerful, cost-effective automation environment. This setup is ideal for businesses that want to automate repetitive tasks without deep technical expertise or reliance on external APIs.

Here's how to build a self-hosted AI automation stack:

- Install n8n on your server or cloud instance to orchestrate workflows and integrate with business tools like email, databases, and APIs.

- Deploy Ollama to run open source LLMs like Llama 3.1 or Mistral locally, enabling private, low-latency AI inference without per-token costs.

- Add Flowise to create conversational AI agents that interact with customers, answer questions, and route inquiries based on context.

- Connect the components using webhooks and API calls, allowing workflows to trigger AI processing, store results, and execute follow-up actions automatically.

- Schedule workflows with cron jobs or event triggers to ensure continuous operation and timely responses to business events.

Persistent autonomous agents take automation further by running 24/7 and making decisions without human intervention. OpenClaw autonomous agents exemplify this approach, using open source LLMs to monitor inputs, execute tasks, and adapt behavior based on feedback loops. These agents integrate with business systems via APIs, maintain memory of past interactions, and coordinate with other agents to handle complex, multi-step processes.

Sovereign AI agent factories represent the most advanced deployment model. These systems use container orchestration platforms like Kubernetes, inference servers like vLLM for high-throughput model serving, and agent frameworks like LangGraph for building stateful, multi-agent workflows. Factories enable you to deploy dozens or hundreds of specialized agents, each optimized for a specific domain like sales, support, or operations. This architecture supports massive scale, fine-grained control, and seamless integration with enterprise data pipelines.

| Deployment Method | Scalability | Complexity | Best Use Cases |

|---|---|---|---|

| n8n + Ollama + Flowise | Low to Medium | Low | Workflow automation, chatbots, data processing |

| OpenClaw Agents | Medium | Medium | 24/7 monitoring, customer support, content generation |

| Sovereign Factories | High | High | Enterprise-scale automation, multi-agent orchestration |

All three approaches enable continuous operation through heartbeat monitoring and cron scheduling. Workflows can check system health, restart failed processes, and alert operators to anomalies. Integration with business pipelines happens via APIs, webhooks, and database connectors, allowing AI agents to read from CRM systems, write to content management platforms, and trigger actions in external tools. Orchestration frameworks coordinate multiple agents, ensuring tasks are distributed efficiently and dependencies are managed correctly.

You can explore AI domain expert operators and AI multi-agent teams to understand how specialized agents collaborate to automate complex business processes. These setups demonstrate the power of combining open source AI with thoughtful architecture to achieve operational efficiency at scale.

Pro Tip: Monitor agent health with automated checks and pruning to manage instability and resource use. Implement logging, error tracking, and memory limits to prevent runaway processes. Use dashboards to visualize agent performance and identify bottlenecks before they impact business operations.

Challenges and expert nuances in using open source AI agents

Despite impressive capabilities, open source AI agents face real limitations that can disrupt business operations if not managed carefully. AI agents experience instability due to looping, hallucinations, coherence loss in iterative logic, non-determinism, and vulnerability to prompt injection attacks. These failure modes stem from the fundamental nature of AI as pattern recognition systems rather than true reasoning engines.

Common pitfalls include:

- Looping occurs when agents repeat the same action indefinitely, failing to recognize task completion or detect errors in their logic.

- Hallucinations happen when models generate plausible but factually incorrect information, leading to decisions based on false premises.

- Coherence loss emerges during multi-step reasoning as context windows fill up, causing agents to lose track of original goals or contradict earlier statements.

- Non-determinism means the same input can produce different outputs, making it hard to predict agent behavior or debug failures.

- Prompt injection allows malicious users to override agent instructions by embedding commands in user input, compromising security and reliability.

AI's pattern recognition limits true understanding, impacting performance on edge cases and rare scenarios. Models trained on common patterns struggle with novel situations, distribution shifts, or tasks requiring deep domain expertise. When an agent encounters a scenario outside its training distribution, it may produce nonsensical outputs or fail silently. This is especially problematic in regulated industries where errors can have legal or financial consequences.

Risks of non-determinism and prompt injection affect agent reliability and security in production environments. Non-deterministic behavior makes it difficult to reproduce bugs or validate agent decisions. Prompt injection attacks exploit the way models process instructions, allowing attackers to manipulate agent behavior by crafting inputs that override system prompts. These vulnerabilities require robust safety measures to prevent exploitation and ensure consistent performance.

Expert recommendations for safety layers include:

- Heavy instrumentation with logging, monitoring, and alerting to detect anomalies and track agent behavior in real time.

- Real-time monitoring of agent outputs, resource usage, and error rates to identify failures before they cascade.

- Memory pruning to limit context window size, preventing coherence loss and reducing computational overhead.

- Input validation and sanitization to block prompt injection attempts and filter malicious content.

- Fallback mechanisms that revert to human oversight or simpler rule-based systems when agents encounter high-risk scenarios.

"Deploying AI agents without safety instrumentation is like driving a car without brakes. You might reach your destination, but the risk of catastrophic failure is unacceptably high. Production-grade AI systems require continuous monitoring, memory management, and fallback strategies to maintain reliability and protect business operations." — AI Safety Researcher

You can learn more about AI deployment safety and monitoring and explore reliable AI domain expert operators to understand how platforms implement these safety measures. The key is treating AI agents as probabilistic systems that require ongoing supervision rather than deterministic tools that can run unsupervised indefinitely.

Balancing the benefits of open source AI with its limitations requires a pragmatic approach. Use agents for well-defined, low-risk tasks where errors are tolerable or easily corrected. Reserve human oversight for high-stakes decisions and complex reasoning. Implement hybrid architectures that combine open source AI for routine automation with proprietary models or human judgment for critical tasks. This strategy maximizes efficiency while minimizing exposure to agent failures.

Harness open source AI with AgentsBooks

AgentsBooks empowers you to deploy AI agents tailored for operational efficiency with customizable and scalable features that align with open source principles. The platform offers AI domain expert operators that you can configure for privacy and control, running on your infrastructure or cloud environment. AI multi-agent teams facilitate collaborative automation workflows, enabling specialized agents to coordinate tasks, share context, and deliver results faster than single-agent setups.

User-friendly tools for creating and managing domain expert AI agents make it easy to get started without deep technical expertise. The platform's agent creation features guide you through defining agent roles, configuring knowledge sources, and deploying agents across multiple channels. Whether you're automating customer support, content generation, or data analysis, AgentsBooks accelerates integration of open source AI benefits into enterprise operations. Explore the platform to discover how customizable, cost-effective AI agents can transform your business workflows.

Frequently asked questions

What legal considerations should I keep in mind with open source AI?

Verify that models use OSI-approved licenses and provide access to training data, weights, and source code as required by OSAID for lawful use. Consult legal experts when deploying in commercial settings to ensure compliance with licensing terms and avoid violations. Some open source licenses impose reciprocal or copyleft obligations that require sharing derivative works.

How does open source AI compare with proprietary AI for language understanding tasks?

Open source AI matches proprietary models on many language tasks with minimal benchmark gaps by 2026, as shown in performance testing. Proprietary models may still excel in novel reasoning, extensive multilingual support, and access to the latest research advancements. For most business automation workflows, open source AI delivers sufficient performance at a fraction of the cost.

What are best practices for ensuring AI agent stability in business automation?

Implement monitoring, memory pruning, and safety instrumentation to detect and mitigate instability caused by looping, hallucinations, and coherence loss. Use hybrid approaches combining open source with proprietary AI for critical tasks requiring high reliability. Regularly test agents under real-world scenarios to detect failures early and refine safety measures. Learn more about AI deployment safety best practices.

Can I customize open source AI models to fit specific business processes?

Yes, access to source code, training data, and model weights enables deep customization for unique operational workflows. You can fine-tune models on proprietary data, adjust architectures to optimize for specific tasks, and integrate agents with internal systems via APIs. This flexibility supports deploying AI agents tailored to industry requirements, compliance standards, and business logic that proprietary APIs cannot accommodate.