Choosing the right AI agent feels overwhelming when every vendor promises revolutionary automation. Many solutions turn out to be expensive chatbots lacking true autonomous planning, leaving businesses disappointed after significant investment. You need a practical framework to evaluate AI agents and distinguish genuine productivity tools from overhyped software. This guide delivers clear criteria to assess AI agent capabilities, reveals measurable benefits backed by empirical research, and shows you how to deploy these systems safely. By understanding what separates real autonomous agents from simple dialogue systems, you'll make informed decisions that unlock substantial productivity gains for your team and workflows.

Table of Contents

- Key takeaways

- Evaluating AI agents: essential criteria to unlock real benefits

- Top AI agent benefits: productivity gains and workflow improvements

- Risks and challenges in deploying AI agents effectively

- Comparing AI agents: performance benchmarks and real-world task autonomy

- Explore advanced AI agents with AgentsBooks

- FAQ

Key takeaways

| Point | Details |
|---|---|
| Autonomy distinction | True AI agents can plan, commit to goals, and execute multi-step tasks without constant human direction. |
| ABC framework | The ABC criteria evaluate Action, Brain, and Context to distinguish genuine autonomous agents from enhanced chatbots. |
| Human in the loop | Effective deployments combine guardrails and human oversight to sustain reliability across complex workflows. |
| Domain specialization | Industry expert agents outperform generalist systems by aligning with terminology, workflows, and constraints. |

Evaluating AI agents: essential criteria to unlock real benefits

True AI agents differ fundamentally from chatbots in their ability to plan, commit to goals, and execute multi-step tasks without constant human direction. Many products marketed as agents are effectively expensive chatbots: they lack the planning component that enables genuine autonomy. Understanding this distinction saves you from costly implementation mistakes.

The ABC framework provides a clear lens for evaluation. Action refers to the agent's ability to interact with systems and execute tasks beyond conversation. Brain means the agent possesses planning capabilities to break down complex goals into executable steps and adapt when obstacles arise. Context encompasses the agent's awareness of its environment, available tools, and relevant information needed to make intelligent decisions. Without all three components working together, you're dealing with an enhanced chatbot rather than a true autonomous agent.
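As a rough illustration, the ABC check can be written down as a three-part checklist. The sketch below is hypothetical: `AgentProfile`, `missing_components`, and `classify` are names invented for this example, and the true/false answers would come from your own evaluation, not from any vendor API.

```python
from dataclasses import dataclass


@dataclass
class AgentProfile:
    """Hypothetical ABC checklist for one candidate product."""
    name: str
    action: bool   # can it act on external systems, not just converse?
    brain: bool    # can it plan multi-step work and re-plan on obstacles?
    context: bool  # does it track its environment, tools, and relevant data?


def missing_components(profile: AgentProfile) -> list[str]:
    """Return the ABC components the candidate lacks."""
    checks = {
        "Action": profile.action,
        "Brain": profile.brain,
        "Context": profile.context,
    }
    return [label for label, present in checks.items() if not present]


def classify(profile: AgentProfile) -> str:
    """All three components present means agent; anything less means chatbot."""
    if missing_components(profile):
        return "enhanced chatbot"
    return "autonomous agent"


# Illustrative candidate that can act and hold context but cannot plan.
candidate = AgentProfile(name="Vendor X", action=True, brain=False, context=True)
print(classify(candidate), missing_components(candidate))
```

Listing the missing components, rather than returning only a verdict, gives you a concrete question to take back to the vendor.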

Simplistic agent designs create hidden costs that erode the promised productivity benefits. Agents without robust error handling require constant human intervention, turning automation into a monitoring burden. Poorly designed systems lack the commitment mechanisms needed to persist through multi-step workflows, abandoning tasks at the first sign of complexity. These failures waste engineering time and damage stakeholder confidence in AI automation initiatives.

When evaluating potential solutions, focus on these critical dimensions:

- Autonomy level: Can the agent complete multi-step tasks without human checkpoints at every stage?

- Planning sophistication: Does it break down complex goals and adapt plans when circumstances change?

- Error handling: How does the system respond to unexpected inputs, API failures, or ambiguous situations?

- Context retention: Does the agent maintain awareness across extended interactions and multiple tasks?

- Scalability: Can you deploy multiple agents without exponential increases in oversight requirements?
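One way to make these dimensions comparable across vendors is a simple weighted rubric. The sketch below assumes you rate each dimension from 0 to 5 after a demo or pilot. The weights and the example ratings are illustrative placeholders, not recommended values.

```python
# Illustrative weights only; adjust them to your own priorities.
# They sum to 1 so the combined score stays on the same 0-5 scale.
WEIGHTS = {
    "autonomy": 0.25,
    "planning": 0.25,
    "error_handling": 0.20,
    "context_retention": 0.15,
    "scalability": 0.15,
}


def weighted_score(ratings: dict[str, int]) -> float:
    """Combine 0-5 ratings for every dimension into a single 0-5 score."""
    missing = set(WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    for dimension in WEIGHTS:
        if not 0 <= ratings[dimension] <= 5:
            raise ValueError(f"{dimension} rating must be between 0 and 5")
    return sum(WEIGHTS[d] * ratings[d] for d in WEIGHTS)


# Hypothetical ratings recorded after a vendor demo.
vendor_a = {
    "autonomy": 4,
    "planning": 3,
    "error_handling": 2,
    "context_retention": 4,
    "scalability": 3,
}
print(round(weighted_score(vendor_a), 2))
```

A single number hides detail, so keep the per-dimension ratings alongside it; a low error-handling rating matters even when the total looks acceptable.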

Pro Tip: Request concrete examples of completed workflows during vendor demos. Ask to see how the agent handles failures, not just success cases. This reveals whether you're evaluating a true autonomous system or a scripted demo designed to impress.

Domain specialization matters significantly for practical deployment. AI domain expert operators trained on specific business contexts outperform generalist agents because they understand industry terminology, common workflows, and relevant constraints. An agent designed for customer support needs different capabilities than one built for code review or financial analysis.

Top AI agent benefits: productivity gains and workflow improvements

Agent-centric workflow redesign drives productivity increases of 2 to 10 times by fundamentally rethinking how work gets done. These gains emerge not from simply automating existing processes but from redesigning workflows around agent capabilities. Organizations that achieve the highest productivity multiples restructure tasks to leverage agent strengths while routing complex judgment calls to humans.

Empirical research demonstrates task time reductions of 15 to 50 percent across diverse professional roles. Writers using AI agents complete drafts 40 percent faster while maintaining quality standards. Customer support teams resolve routine inquiries in half the time, freeing specialists to handle complex cases requiring empathy and creative problem solving. Software developers accelerate code review and documentation tasks by 25 to 35 percent, redirecting energy toward architecture and innovation.
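Time reductions and "N times" productivity multiples sit on different scales, so it helps to convert between them. The sketch below uses the plain arithmetic relation speedup = 1 / (1 - reduction), assuming output per task stays constant. The function name is invented for this example.

```python
def speedup_from_time_reduction(reduction: float) -> float:
    """Convert a fractional time reduction (0.5 = 50 percent) to a throughput multiple."""
    if not 0 <= reduction < 1:
        raise ValueError("reduction must be at least 0 and below 1")
    return 1 / (1 - reduction)


for pct in (15, 50, 90):
    multiple = speedup_from_time_reduction(pct / 100)
    print(f"{pct}% less time -> {multiple:.2f}x throughput")
```

Under this relation, a 15 to 50 percent time reduction on a single task corresponds to roughly 1.2 to 2 times throughput, while a 10 times gain would need a 90 percent reduction. That gap is consistent with the point above that the largest multiples come from redesigning workflows, not from speeding up individual tasks.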

Specific benefits vary by role and implementation approach:

- Writing and content creation: Agents handle research, outline generation, and first draft production, reducing time from ideation to publication

- Customer support: Automated triage, response drafting, and knowledge base queries enable AI customer support agents to resolve 60 to 70 percent of routine inquiries

- Sales and business intelligence: Agents synthesize data from multiple sources, generate insights, and prepare presentation materials

- Software development: Code review, test generation, documentation updates, and dependency management tasks become largely autonomous

- Administrative workflows: Scheduling, email management, data entry, and report compilation run without human intervention

One audit team reduced reporting time by 92 percent after implementing agents trained on compliance requirements and data extraction protocols. The agents handled routine verification tasks while flagging anomalies for human review. This dramatic improvement came from redesigning the audit workflow rather than simply automating existing manual steps.

Scale advantages emerge as organizations deploy multiple specialized agents. A coordinated AI workforce handles parallel workstreams that would overwhelm human teams. Five agents can simultaneously research different market segments, analyze competitor strategies, and compile findings into a unified report. This parallel processing capability compresses project timelines from weeks to days.

Critical insight: Organizations achieving 5 to 10 times productivity gains restructure roles around agent collaboration rather than treating AI as a simple productivity multiplier for existing jobs.

The compounding effect of multiple efficiency improvements creates outsized returns. When agents handle research, drafting, and formatting tasks, professionals spend 70 percent of their time on high-value activities like strategy, relationship building, and creative problem solving. This shift in time allocation drives both productivity metrics and job satisfaction improvements.

Risks and challenges in deploying AI agents effectively

AI agents introduce failure modes that differ fundamentally from traditional software errors. Hallucinations, brittleness, and coordination failures represent the most common edge cases requiring mitigation strategies. Hallucinations occur when agents generate plausible but factually incorrect information, creating downstream problems that compound across workflows. Brittleness means agents fail unpredictably when encountering inputs slightly outside their training distribution. Coordination failures occur when multiple agents working on interdependent tasks fall out of step with one another.

Error propagation poses particular risks in multi-agent systems. When one agent produces flawed output that feeds into another agent's workflow, mistakes amplify rather than cancel out. A research agent that misinterprets a data source can cause a reporting agent to build an entire analysis on false premises. These cascading failures require robust validation checkpoints and human oversight at critical junctures.
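A back-of-the-envelope model shows why cascading errors matter. The sketch below makes two simplifying assumptions: each stage succeeds independently, and a validation checkpoint catches and corrects a fixed share of a stage's errors. The 95 percent stage rate and 80 percent catch rate are made-up illustrative numbers, not measurements.

```python
from math import prod


def chain_success_rate(stage_rates: list[float]) -> float:
    """Probability that every stage succeeds, assuming independent stages."""
    return prod(stage_rates)


def with_checkpoint(stage_rate: float, catch_rate: float) -> float:
    """Effective stage success rate when a validator corrects a share of errors."""
    return stage_rate + (1 - stage_rate) * catch_rate


# Five chained agents, each assumed correct 95 percent of the time.
pipeline = [0.95] * 5
print(f"no checkpoints:   {chain_success_rate(pipeline):.3f}")

# Same pipeline with a checkpoint after each stage (assumed 80 percent catch rate).
checked = [with_checkpoint(rate, catch_rate=0.8) for rate in pipeline]
print(f"with checkpoints: {chain_success_rate(checked):.3f}")
```

Real pipelines rarely have independent stages, so treat the result as intuition, not a forecast: even reliable individual agents can produce an unreliable chain without validation between them.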

Common failure patterns to anticipate:

- Context window limitations causing agents to lose critical information in extended interactions

- Overconfidence in uncertain situations, leading to decisive action based on insufficient data

- Difficulty handling ambiguous instructions or conflicting objectives

- Inability to recognize when a task exceeds agent capabilities, resulting in poor quality output rather than escalation

- Coordination breakdowns when multiple agents work on interdependent tasks

Mitigation requires layered defenses combining technical guardrails with human oversight protocols. Automated evaluation systems continuously test agent outputs against known standards, catching errors before they reach production workflows. Confidence scoring helps agents recognize uncertainty and request human guidance rather than proceeding blindly. Output validation checks ensure generated content meets basic quality and factual accuracy thresholds.
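The combination of confidence scoring and output validation can be sketched as a small routing function. Everything here is hypothetical: `AgentOutput`, `route`, the two validators, and the 0.8 threshold are illustrative, and the sketch assumes your agent exposes some confidence score between 0 and 1.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class AgentOutput:
    text: str
    confidence: float  # assumed score in [0, 1]; how it is produced is out of scope


def non_empty(text: str) -> bool:
    """Reject blank output."""
    return bool(text.strip())


def no_placeholder(text: str) -> bool:
    """Reject drafts that still contain an unfinished marker."""
    return "TODO" not in text


def route(
    output: AgentOutput,
    validators: list[Callable[[str], bool]],
    threshold: float = 0.8,
) -> str:
    """Return 'escalate' on low confidence or a failed check, else 'accept'."""
    if output.confidence < threshold:
        return "escalate"
    if not all(check(output.text) for check in validators):
        return "escalate"
    return "accept"


checks = [non_empty, no_placeholder]
print(route(AgentOutput("Refund approved per policy.", 0.93), checks))
print(route(AgentOutput("Refund approved per policy.", 0.55), checks))
print(route(AgentOutput("TODO: confirm amount", 0.95), checks))
```

Escalating on either signal keeps the two guardrails independent: a confident but malformed output still reaches a human, and so does a well-formed output the agent is unsure about.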

Human-in-the-loop strategies balance automation benefits with safety requirements. Critical decisions, sensitive communications, and high-stakes tasks require human review before execution. This doesn't eliminate productivity gains because agents still handle research, drafting, and preparation work. Humans focus their time on judgment calls and final approval rather than routine execution.

Pro Tip: Implement staged rollouts starting with low-risk tasks where errors create minimal consequences. Monitor failure patterns closely during initial deployment and refine guardrails based on observed edge cases. This iterative approach builds institutional knowledge about agent limitations while delivering incremental value.

Ongoing monitoring remains essential even after successful initial deployment. Agent behavior can drift as underlying models update or as the task environment changes. Regular audits of agent outputs, user feedback collection, and performance metric tracking ensure systems continue meeting quality standards. Organizations treating AI agent deployment as a continuous improvement process achieve better long-term outcomes than those implementing once and assuming stability.
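Drift detection can start with something as simple as a rolling pass rate compared against a baseline. The sketch below is a minimal, hypothetical monitor: the class name, window size, and tolerance are assumptions for illustration, and `passed` stands for whatever audit or validation result you already collect.

```python
from collections import deque


class QualityMonitor:
    """Track a rolling pass rate and flag drops below a baseline."""

    def __init__(self, baseline: float, tolerance: float = 0.05, window: int = 50):
        self.baseline = baseline    # pass rate observed during initial rollout
        self.tolerance = tolerance  # allowed drop before flagging drift
        self.results: deque[bool] = deque(maxlen=window)

    def record(self, passed: bool) -> None:
        """Store one audit result; the oldest result falls out when the window is full."""
        self.results.append(passed)

    def pass_rate(self) -> float:
        if not self.results:
            raise ValueError("no results recorded yet")
        return sum(self.results) / len(self.results)

    def drifted(self) -> bool:
        """True once the window is full and the pass rate is below baseline minus tolerance."""
        if len(self.results) < self.results.maxlen:
            return False
        return self.pass_rate() < self.baseline - self.tolerance


monitor = QualityMonitor(baseline=0.9, tolerance=0.05, window=10)
for _ in range(10):
    monitor.record(True)
print(monitor.drifted())
for _ in range(3):
    monitor.record(False)
print(monitor.pass_rate(), monitor.drifted())
```

Waiting for a full window before flagging avoids false alarms from the first few results. A production setup would likely add alerting and per-task breakdowns on top of this.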

"The organizations seeing the greatest success with AI agents maintain healthy skepticism, implement robust testing frameworks, and view deployment as an ongoing process of refinement rather than a one-time implementation project."

Comparing AI agents: performance benchmarks and real-world task autonomy

Benchmark data reveals significant performance variation across AI agent implementations. TheAgentCompany benchmark shows top agents complete 30 percent of real-world professional tasks fully autonomously, establishing a realistic baseline for current capabilities. This completion rate reflects complex, multi-step workflows typical of actual business environments rather than simplified test scenarios.

| Agent Category | Autonomous Task Completion | Setup Complexity | Cost Range | Best Use Cases |
|---|---|---|---|---|
| Specialized domain agents | 40 to 55 percent | Medium | $200 to $800/month | Customer support, content creation, data analysis |
| General-purpose assistants | 20 to 35 percent | Low | $50 to $300/month | Email management, scheduling, research |
| Custom-built enterprise agents | 45 to 60 percent | High | $2,000+/month | Complex workflows, proprietary systems, compliance-heavy processes |
| Multi-agent orchestration platforms | 35 to 50 percent | Medium to High | $500 to $2,000/month | Cross-functional projects, parallel workstreams |

Performance metrics tell only part of the story. Deployment complexity, maintenance requirements, and integration capabilities significantly impact real-world value. Specialized agents excel at narrow tasks but require multiple implementations to cover diverse business needs. General-purpose assistants offer broader capabilities with simpler setup but lower autonomous completion rates for complex workflows.

Key trade-offs when comparing options:

- Specialization versus flexibility: Domain-specific agents outperform generalists in their niche but create integration challenges across workflows

- Setup investment versus time-to-value: Custom solutions deliver higher performance but require months of configuration and training

- Vendor lock-in versus best-of-breed: Integrated platforms simplify management but may underperform specialized point solutions

- Transparency versus performance: More interpretable agents enable better debugging but may sacrifice cutting-edge capabilities

Enterprise teams benefit from studying examples of autonomous agents in business workflows, which demonstrate practical implementation patterns. Organizations achieving the highest ROI typically take a portfolio approach, combining specialized agents for high-volume tasks with general-purpose assistants for ad hoc needs. This hybrid strategy balances performance optimization with operational simplicity.

Cost analysis requires looking beyond subscription fees to total cost of ownership. Agent systems demanding extensive human oversight erode productivity gains through monitoring burden. Solutions requiring frequent retraining or configuration updates consume engineering resources that could drive other initiatives. Factor in these hidden costs when comparing options with different autonomy levels and maintenance requirements.
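The total cost of ownership comparison comes down to simple arithmetic once oversight and maintenance hours are estimated. In the sketch below, every figure (subscription prices, hours, and the $60 hourly rate) is a hypothetical input chosen for illustration, and `monthly_tco` is a name invented for this example.

```python
def monthly_tco(
    subscription: float,
    oversight_hours: float,
    maintenance_hours: float,
    hourly_rate: float,
) -> float:
    """Subscription fee plus the staff time spent supervising and maintaining the agent."""
    return subscription + (oversight_hours + maintenance_hours) * hourly_rate


# Hypothetical comparison: a cheaper agent that needs heavy supervision
# versus a pricier agent that runs with less oversight.
cheap = monthly_tco(subscription=300, oversight_hours=40, maintenance_hours=10, hourly_rate=60)
pricey = monthly_tco(subscription=800, oversight_hours=10, maintenance_hours=5, hourly_rate=60)
print(f"lower subscription:  ${cheap:,.0f}/month")
print(f"higher subscription: ${pricey:,.0f}/month")
```

With these made-up inputs, the agent with the lower subscription fee costs more overall because staff time dominates the total. Your own numbers may point the other way, which is the reason to run the calculation.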

Interpret benchmarks cautiously because testing conditions rarely match your specific environment. An agent scoring 50 percent on standardized tasks might achieve 70 percent in your environment if your workflow aligns well with the agent's training, or drop to 30 percent if your processes involve unique tools or requirements. Pilot programs testing agents on actual work samples provide more reliable performance indicators than vendor-supplied benchmarks.
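Pilot results deserve the same caution, because a small sample leaves a wide margin of uncertainty. The sketch below computes a Wilson score interval for an observed completion rate. The pilot figures (14 of 40 tasks completed) are invented for illustration, and the interval assumes the pilot tasks are a representative, independent sample of your real workload.

```python
from math import sqrt


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a completion rate (z = 1.96 is roughly 95 percent)."""
    if trials <= 0 or not 0 <= successes <= trials:
        raise ValueError("need trials > 0 and 0 <= successes <= trials")
    p = successes / trials
    denom = 1 + z**2 / trials
    center = (p + z**2 / (2 * trials)) / denom
    half_width = z * sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return center - half_width, center + half_width


# Hypothetical pilot: the agent fully completed 14 of 40 sampled tasks.
low, high = wilson_interval(successes=14, trials=40)
print(f"observed {14 / 40:.0%}, plausible range {low:.0%} to {high:.0%}")
```

If the resulting range is too wide to separate two candidate agents, the pilot needs more tasks before it can support a decision.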

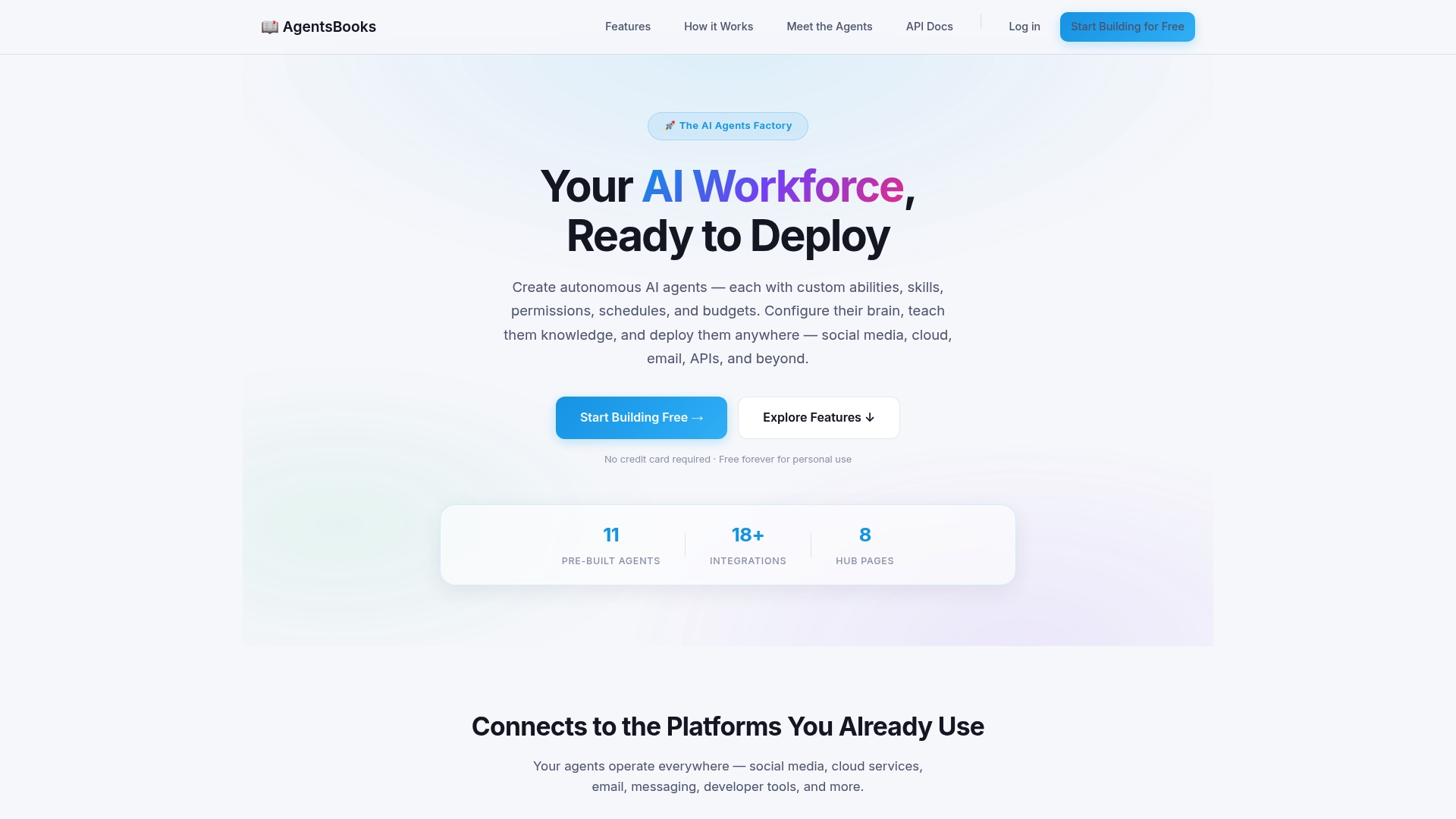

Explore advanced AI agents with AgentsBooks

Turning these insights into action requires a platform designed for practical AI agent deployment. AgentsBooks provides comprehensive tools to build, customize, and manage autonomous agents tailored to your specific business needs. The platform supports everything from simple task automation to sophisticated multi-agent workflows, with built-in guardrails and monitoring to ensure safe, effective deployment.

Whether you need AI domain expert operators for specialized tasks or AI multi-agent teams for complex cross-functional projects, AgentsBooks delivers the flexibility and control required for production environments. The platform emphasizes practical implementation over theoretical capabilities, with developer-friendly APIs, extensive documentation, and proven deployment patterns. Explore how leading organizations leverage AgentsBooks to achieve the productivity gains and workflow improvements discussed throughout this guide.

FAQ

What are AI agents and how do they differ from chatbots?

AI agents autonomously plan and execute multi-step tasks. The ABC framework captures what sets them apart: Action capabilities to interact with systems, Brain functions for intelligent planning and adaptation, and Context awareness to make informed decisions. Chatbots primarily handle conversational interactions without true autonomy or goal-directed behavior. While chatbots respond to user prompts, AI agents proactively work toward objectives, adjusting their approach when obstacles arise and persisting through complex workflows without constant human direction.

How much productivity improvement can I expect using AI agents?

Empirical studies show productivity gains of 2 to 10 times when organizations redesign workflows around agent capabilities rather than simply automating existing processes. Typical time savings range from 15 to 50 percent across writing, customer support, and software development tasks. The highest gains come from restructuring roles to leverage agent strengths for routine execution while focusing human effort on judgment, creativity, and relationship building. Results vary significantly based on implementation quality, workflow design, and task complexity.

What are the main risks when deploying AI agents?

Common risks include hallucinations, brittleness, and coordination failures that can compromise output quality and create downstream problems. Hallucinations produce plausible but incorrect information, while brittleness causes unpredictable failures with unfamiliar inputs. Mitigation requires guardrails like automated validation, confidence scoring, and output quality checks combined with human-in-the-loop oversight for critical decisions. Organizations achieving safe deployment implement staged rollouts, continuous monitoring, and iterative refinement based on observed failure patterns.

How do I choose between specialized and general-purpose AI agents?

Specialized agents deliver 40 to 55 percent autonomous task completion in their domain compared to 20 to 35 percent for general-purpose assistants, but require more complex integration when covering diverse business needs. Choose specialized agents for high-volume, well-defined workflows where performance justifies setup investment. General-purpose assistants work better for ad hoc tasks, exploratory projects, or organizations just beginning AI agent adoption. Many successful deployments use a portfolio approach, combining specialized agents for core workflows with flexible assistants for varied tasks.