Deploying AI agents at scale once required massive infrastructure investments and specialized engineering teams. Today, cloud services have democratized AI automation, enabling businesses of any size to launch sophisticated agent networks without building data centers or managing complex hardware. Cloud-based AI solutions offer scalable and cost-effective platforms that transform how organizations deploy intelligent automation across digital channels. This guide reveals how leading cloud platforms eliminate traditional barriers, providing the infrastructure, security, and tools needed to operationalize AI agents rapidly and reliably in 2026.

Table of Contents

- Why Cloud Services Are Essential For AI Automation And Agent Deployment

- Comparing The Top Cloud AI Agent Platforms: AWS Bedrock, Google Vertex AI, And Azure AI Foundry

- Architectural Best Practices: Building Reliable, Scalable AI Agents With Cloud-Native Technologies

- Security And Compliance Considerations When Deploying AI Agents In The Cloud

- Explore AgentsBooks For AI Agent Development And Deployment

- What Is The Role Of Infrastructure As Code (Iac) In AI Agent Deployment?

- How Do Cloud AI Platforms Ensure Secure AI Agent Hosting?

- Which Cloud AI Agent Platform Is Best For Businesses Already Using AWS Or Azure?

Key takeaways

| Point | Details |

|---|---|

| Cloud platforms enable scalable deployment | Modern cloud services provide auto-scaling infrastructure that adjusts resources dynamically as AI agent workloads fluctuate. |

| Leading providers offer consumption-based pricing | AWS Bedrock, Google Vertex AI, and Azure AI Foundry charge only for actual compute usage, eliminating upfront infrastructure costs. |

| Infrastructure as Code accelerates deployment | IaC tools like CloudFormation and Terraform automate provisioning, reducing deployment time and configuration errors. |

| Security is built into cloud AI services | Enterprise-grade identity controls, session isolation, and compliance frameworks protect AI workloads from design through operation. |

| Platform choice depends on existing ecosystem | Selecting AWS, Google, or Azure AI services should align with your current cloud infrastructure for seamless integration. |

Why cloud services are essential for AI automation and agent deployment

AI agents demand computational resources that vary wildly based on user interactions, data processing loads, and concurrent tasks. Traditional on-premises infrastructure forces you to provision for peak capacity, leaving expensive hardware idle during low-demand periods. Cloud platforms solve this through elastic scaling that matches resources to real-time needs.

The economic advantage is substantial. Pay-as-you-go pricing means you invest only in actual compute cycles consumed, not theoretical maximum capacity. When your AI agent handles 100 requests per hour versus 10,000, your infrastructure costs scale proportionally. This consumption model transforms AI deployment from a capital expenditure into an operational expense that aligns perfectly with business growth.

Serverless architectures take this further by abstracting infrastructure management entirely. Your development team focuses on agent logic and capabilities rather than server configuration, patching, or capacity planning. Containerization through services like AWS Fargate or Google Cloud Run packages AI agents with their dependencies, ensuring consistent behavior across development, testing, and production environments.

Multi-agent architectures become practical in cloud environments. You can deploy specialized agents for customer service, data analysis, content generation, and workflow automation, each scaling independently based on demand. These agents communicate through managed message queues and APIs, creating sophisticated automation networks without the complexity of coordinating physical infrastructure.

Pro Tip: Start with serverless deployment for new AI agents to minimize operational overhead, then migrate to containerized solutions only when you need fine-grained control over runtime environments or have specific performance requirements that serverless cannot meet.

The AI agent deployment guide 2026 demonstrates how businesses leverage cloud infrastructure to launch production-ready agents in days rather than months. Cloud services handle the undifferentiated heavy lifting of infrastructure management, letting your team concentrate on creating agents that deliver genuine business value.

Comparing the top cloud AI agent platforms: AWS Bedrock, Google Vertex AI, and Azure AI Foundry

Three major platforms dominate the cloud AI agent landscape, each offering distinct advantages for different deployment scenarios. Understanding their capabilities helps you select the platform that best fits your technical stack and business requirements.

| Platform | Key Strengths | Pricing Model | Best For |

|---|---|---|---|

| AWS Bedrock | Seamless AWS integration, serverless runtime, session isolation | Consumption-based, I/O wait free | AWS-native teams needing secure, scalable agent deployment |

| Google Vertex AI | Multimodal capabilities, 7M+ downloads, extensive adoption | Usage-based compute | Organizations prioritizing ML model variety and Google Cloud integration |

| Azure AI Foundry | Enterprise security, workflow integration, built-in observability | Enterprise licensing | Microsoft ecosystem users requiring compliance-ready solutions |

AWS Bedrock AgentCore provides a serverless foundation that eliminates infrastructure management while maintaining enterprise-grade security. The platform uses consumption-based pricing where I/O wait is free, charging only for active processing time. This makes Bedrock particularly cost-effective for agents with variable workloads or long-running tasks that include waiting periods.

Google Vertex AI Agent Builder has achieved remarkable adoption, with the Agent Development Kit reaching 7M+ downloads. The platform excels at multimodal AI applications, supporting text, image, and video processing within unified agent workflows. Organizations already leveraging Google Cloud services benefit from native integration with BigQuery for data analysis and Cloud Storage for knowledge bases.

Azure AI Foundry targets enterprise deployments where security, compliance, and governance are non-negotiable. The platform provides built-in observability tools that track agent behavior, decision paths, and performance metrics without requiring custom instrumentation. For organizations operating in regulated industries, Azure's compliance certifications and audit capabilities significantly reduce the burden of meeting regulatory requirements.

Recent innovations continue differentiating these platforms. Databricks Mosaic AI introduced Storage-Optimized Vector Search with 7x lower cost compared to previous solutions, demonstrating how platform competition drives better economics for AI deployment.

Pro Tip: Evaluate platforms based on your existing cloud commitments first. The integration benefits of staying within a single cloud ecosystem typically outweigh marginal feature differences between providers.

The Amazon Bedrock vs Google Vertex AI comparison provides detailed technical analysis of these platforms, helping you map specific capabilities to your deployment requirements. Your choice should prioritize seamless integration with existing tools and services rather than chasing individual features.

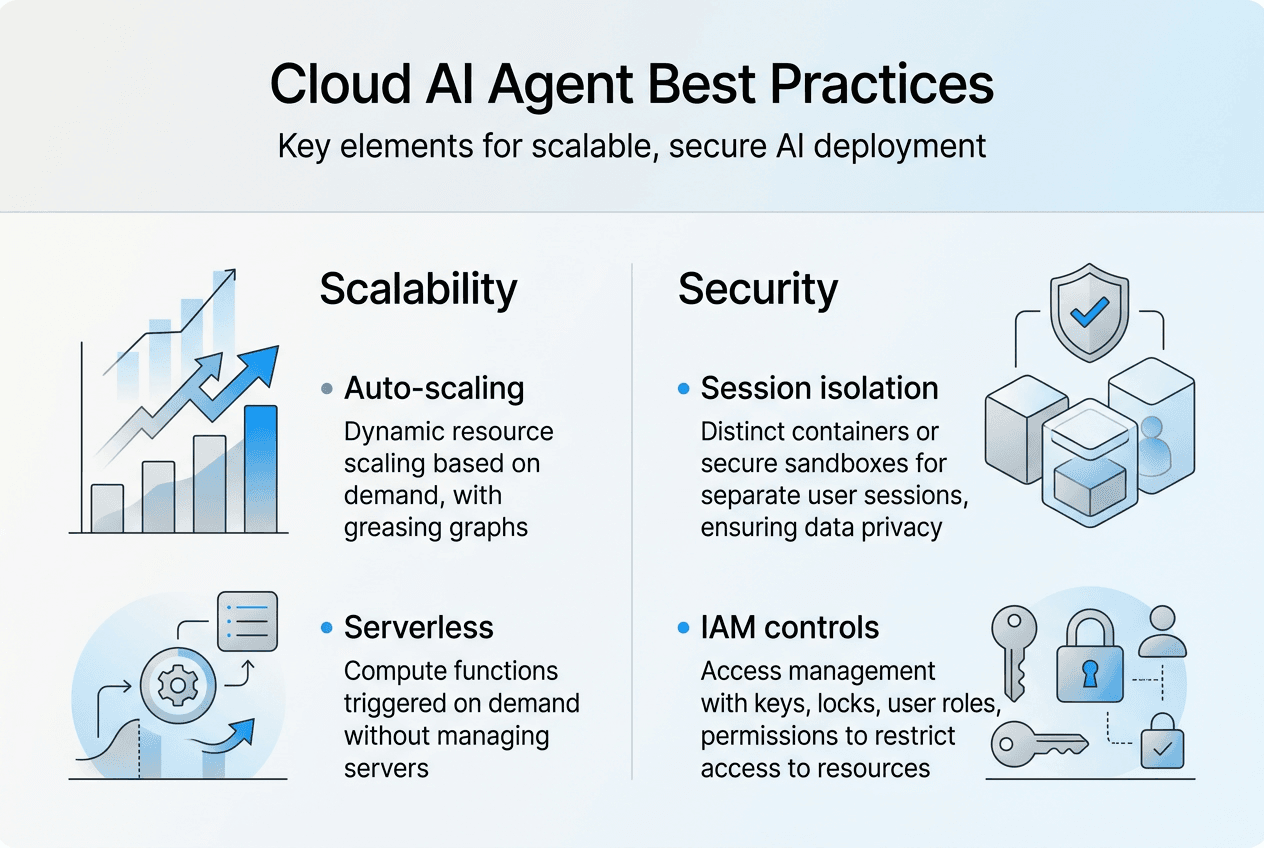

Architectural best practices: building reliable, scalable AI agents with cloud-native technologies

Production-grade AI agents require thoughtful architecture that balances performance, reliability, and maintainability. Cloud-native patterns provide proven frameworks for building systems that scale gracefully and recover from failures automatically.

-

Implement Infrastructure as Code for all provisioning and configuration. IaC facilitates consistent, secure, and scalable infrastructure while minimizing manual errors that plague traditional deployment processes. Tools like AWS CloudFormation or Terraform codify your entire infrastructure, enabling version control, peer review, and automated rollback when issues arise.

-

Establish CI/CD pipelines that automate testing and deployment. GitHub Actions streamlines deployment in compliance with security standards, ensuring every code change passes automated security scans, unit tests, and integration validation before reaching production. This automation eliminates the manual handoffs that introduce delays and errors.

-

Adopt microservices architecture to isolate agent capabilities into independent, deployable units. Each microservice handles a specific function like natural language processing, data retrieval, or external API integration. This modularity lets you update individual components without redeploying entire systems, accelerating iteration cycles and reducing deployment risk.

-

Design for failure with retry logic, circuit breakers, and graceful degradation. AI agents interact with external services that may experience latency spikes or temporary outages. Implementing exponential backoff for retries and fallback behaviors ensures your agents continue providing value even when dependent services falter.

-

Containerize agents to ensure consistency across environments. Docker containers package your agent code with exact dependency versions, eliminating the configuration drift that causes "works on my machine" problems. Container orchestration through Kubernetes or managed services like AWS ECS handles scaling, health monitoring, and automatic recovery.

Infrastructure as Code transforms AI deployment from artisanal craft into repeatable engineering practice, enabling teams to provision complex agent architectures in minutes rather than weeks.

Security and compliance must be architectural concerns from day one, not afterthoughts bolted onto existing systems. Implementing identity and access management, encryption at rest and in transit, and comprehensive logging as foundational elements prevents costly retrofits when compliance requirements emerge.

The AI domain expert operators use case demonstrates how properly architected agents operate autonomously while maintaining visibility and control. Similarly, the AI DevOps engineering agent showcases automation patterns that reduce operational burden without sacrificing reliability.

Pro Tip: Invest time in observability from the start by instrumenting agents with structured logging, metrics collection, and distributed tracing. The debugging time you save when issues arise will more than justify the initial implementation effort.

Security and compliance considerations when deploying AI agents in the cloud

Security breaches involving AI systems carry amplified consequences because agents often access sensitive data and execute privileged operations autonomously. Cloud platforms recognize this reality by embedding security controls throughout their AI services rather than treating security as an optional add-on.

Azure AI Foundry Agent Service enables secure design and deployment with built-in observability and compliance-ready frameworks for enterprise operations. The platform provides guardrails that prevent agents from accessing unauthorized resources or executing dangerous operations, even if prompt injection or other attacks attempt to manipulate agent behavior.

Session isolation represents a critical security boundary. AWS implements dedicated microVMs for individual user sessions, ensuring that one user's interactions cannot leak information to another user's session. This architectural approach prevents cross-session contamination that could expose confidential data or enable lateral movement by attackers.

Identity and access management forms the foundation of cloud AI security. Implementing least-privilege principles means granting agents only the specific permissions required for their designated tasks. An agent handling customer inquiries needs read access to product catalogs but should never possess write access to pricing databases or customer payment information.

The scale of security investment by major cloud providers creates advantages difficult to replicate internally. Microsoft employs 34,000 engineers and 15,000 partners dedicated to security, continuously monitoring threats and updating defenses across their cloud infrastructure. This collective security intelligence benefits all customers deploying AI agents on the platform.

Compliance certifications reduce the burden of meeting regulatory requirements. Cloud platforms maintain SOC 2, ISO 27001, HIPAA, and industry-specific certifications, providing documentation and controls that satisfy auditors. Deploying on certified infrastructure accelerates your own compliance efforts rather than building everything from scratch.

- Implement comprehensive logging that captures agent decisions, data access, and external interactions for audit trails

- Encrypt data at rest using platform-managed keys or bring your own key for additional control

- Enable multi-factor authentication for all administrative access to agent management interfaces

- Conduct regular security reviews of agent permissions and remove unnecessary access grants

- Establish incident response procedures specific to AI agent security events

Pro Tip: Treat AI agent credentials with the same rigor as database passwords or API keys. Rotate credentials regularly, never hardcode them in source code, and use secret management services like AWS Secrets Manager or Azure Key Vault to inject credentials at runtime.

The cloud AI management 2026 guide explores governance frameworks that balance security requirements with operational agility, helping you implement controls without creating bottlenecks that slow legitimate development.

Explore AgentsBooks for AI agent development and deployment

Transitioning from cloud infrastructure knowledge to practical agent creation requires tools that simplify the development process while leveraging cloud capabilities effectively. AgentsBooks provides a comprehensive platform for building, customizing, and operating AI agents that integrate seamlessly with cloud services.

The platform enables you to construct agents through descriptive profiles rather than complex coding, making AI automation accessible to teams without deep machine learning expertise. You configure agent abilities, knowledge sources, and behavioral parameters through intuitive interfaces, then deploy across social media, email, APIs, and messaging platforms with minimal friction.

AI multi-agent teams demonstrate how collaborative agent networks tackle complex workflows by distributing specialized tasks across multiple autonomous units. This architectural pattern mirrors the microservices approach discussed earlier, creating resilient systems where individual agent failures do not cascade into total system breakdowns. The platform provides REST APIs, SDKs, and open-source code for developers requiring programmatic control, positioning AgentsBooks as a developer-friendly solution that scales from simple automation to enterprise AI workforce orchestration.

What is the role of Infrastructure as Code (IaC) in AI agent deployment?

How does IaC improve AI agent deployment reliability?

IaC automates provisioning and configuration management, eliminating manual setup errors that cause deployment failures and security vulnerabilities. Teams can version control infrastructure definitions just like application code, enabling rollback to known-good configurations when issues arise. The AWS CloudFormation for AI agents approach demonstrates how declarative templates create reproducible environments across development, staging, and production.

What IaC tools work best for cloud AI deployments?

AWS CloudFormation integrates natively with AWS services, providing deep integration for Bedrock and other AWS AI tools. Terraform offers cloud-agnostic templates that work across AWS, Google Cloud, and Azure, ideal for multi-cloud strategies. Pulumi enables infrastructure definition using familiar programming languages like Python or TypeScript, reducing the learning curve for development teams.

Can IaC handle complex multi-agent architectures?

Modern IaC tools support modular templates that compose simple components into sophisticated systems. You define individual agent infrastructure as reusable modules, then orchestrate multiple agents with shared resources like databases, message queues, and API gateways. This modularity mirrors software engineering best practices, making complex architectures maintainable as they evolve.

How do cloud AI platforms ensure secure AI agent hosting?

What security mechanisms protect AI agents in cloud environments?

Providers implement session isolation through dedicated compute environments, preventing cross-contamination between user interactions. Identity controls enforce least-privilege access, ensuring agents operate only within authorized boundaries. Encryption protects data at rest and in transit, while compliance frameworks provide audit trails and governance controls. The cloud AI security and compliance guide details these layered defenses.

How do cloud platforms prevent AI agent prompt injection attacks?

Platforms implement input validation that sanitizes user prompts before processing, filtering malicious instructions that attempt to manipulate agent behavior. Guardrails define acceptable operation boundaries, automatically blocking requests that violate security policies. Continuous monitoring detects anomalous patterns indicating potential attacks, triggering automated responses that isolate compromised agents.

Are cloud AI platforms compliant with data protection regulations?

Major platforms maintain certifications for GDPR, HIPAA, SOC 2, and industry-specific regulations. They provide data residency controls that keep information within specified geographic regions, satisfying localization requirements. Compliance documentation and audit support reduce the burden on organizations deploying regulated AI applications.

Which cloud AI agent platform is best for businesses already using AWS or Azure?

Should AWS users choose Bedrock for AI agents?

AWS Bedrock integrates seamlessly with existing AWS services like S3 for storage, Lambda for serverless functions, and IAM for identity management. This native integration eliminates complex cross-platform authentication and reduces data transfer costs. Teams familiar with AWS tools can leverage existing expertise rather than learning new platforms.

What advantages does Azure AI Foundry offer Microsoft ecosystem users?

Azure AI Foundry connects directly with Microsoft 365, Dynamics, and Power Platform, enabling AI agents to access enterprise data and workflows without custom integration work. Organizations using Azure Active Directory benefit from unified identity management across AI and traditional applications. The cloud AI platform comparison highlights these ecosystem advantages.

Can you mix cloud AI platforms or should you standardize on one?

Multi-cloud strategies add complexity through disparate tools, authentication systems, and operational procedures. Most organizations achieve better results by standardizing on the platform aligned with their primary cloud infrastructure. The integration benefits and operational simplicity of a single platform typically outweigh the marginal advantages of mixing providers for specific features.